Bridge the gap between data chaos and clarity with the strategic use of Power BI Logical Functions

The missing piece to decoding our data and unlocking its full potential is often the strategic application of DAX Logical Functions. These functions are pivotal in dissecting complex datasets, applying business logic, and enabling a nuanced approach to data analysis that goes beyond surface-level insights. Better understanding DAX Logical Functions allows us to create more sophisticated data models that respond with agility to analytical queries, turning abstract numbers into actionable insights.

In this post we will explore this group of functions and how we can leverage them within Power BI. We will dive in and see how we can transform our data analysis from a mere task into an insightful journey, ensuring that every decision is informed, every strategy is data-driven, and every report illuminates a path to action.

The Logical Side of DAX: Unveiling Power BI’s Brain

Diving into the logical side of DAX is where everything begins to become clear. Logical functions are the logical brain behind Power BI’s ability to make decisions. Just like we process information to decide between right and wrong, DAX logical functions sift through our data to determine truth values: true or false.

Functions such as IF, AND, OR, NOT, and TRUE/FALSE, are the building blocks for creating dynamic reports. These functions allow us to set up conditions that our data must meet, enabling a level of interaction and decision-making that is both powerful and nuanced. Whether we are determining if sales targets were hit or filtering data based on specific criteria, logical functions are our go-to tools for making sense of the numbers.

For details on these functions and many others visit the DAX Function Reference documentation.

DAX Function Reference – Logical Functions

Learn more about: DAX Logical Functions

The logical functions in DAX can go far beyond the basics. The real power happens when we start combining these functions to reflect complex business logic. Each function plays its role and when used in combination correctly we can implement complex logic scenarios.

For those eager to start experimenting there is a Power BI report pre-loaded with the same data used in this post ready for you! So don’t just read, follow along and get hands-on with DAX in Power BI. Get a copy of the sample data report here:

GitHub – Power BI DAX Function Series: Mastering Data Analysis

This dynamic repository is the perfect place to enhance your learning journey.

Understanding DAX Logical Functions: A Beginner’s Guide

When starting our journey with DAX logical functions we will begin to understand the unique role of each function within our DAX expressions. Among these functions, the IF function stands out as the decision-making cornerstone.

The IF function tests a condition, returning one result if the condition is TRUE, and another if FALSE. Here is its syntax.

IF(logical_test, value_if_true, value_if_false)

The logical_test parameter is any value or expression that can be evaluated to TRUE or FALSE, and then the value_if_true is the value that is returned if logical_test is TRUE, and the value_if_false is optional and is the value returned when logical_test is FALSE. When value_if_false is omitted, BLANK is returned when the logical_test is FALSE.

Let’s say we want to identify which sales have an amount that exceeds $5,000. To do this we can add a new calculated column to our Sales table with the following expression.

Sales Target Categorization =

IF(

Sales[Amount] > 5000,

"Above Target",

"Below Target"

)

This expression will evaluate each sale in our Sales table, labeling each sale as either “Above Target” or “Below Target” based on the Sales[Amount].

The beauty of starting our journey with IF lies in its simplicity and versatility. While we continue to explore logical functions, it won’t be long before we encounter TRUE/FALSE.

As we saw with the IF function these values help guide our DAX expressions, they are also their own DAX function. These two functions are the DAX way of saying yes (TRUE) or no (FALSE), often used within other logical functions or conditions to express a clear binary choice.

These functions are as straightforward as they sound and do not require any parameters. When used with other functions or conditional expressions we typically use these to explicitly return TRUE or FALSE values.

For example, we can create another calculated column to check if a sale is a high value sale with an amount greater than $9,000.

High Value Sale =

IF(

Sales[Amount] > 9000,

TRUE,

FALSE

)

This simple expression checks if the sales amount exceeds $9,000, marking each record as TRUE if so, or FALSE otherwise.

Together IF and TRUE/FALSE form the foundation of logical expressions in DAX, setting the stage for more complex decision-making analysis. Think of these functions as essential for our logical analysis, but just the beginning of what is possible.

The Gateway to DAX Logic: Exploring IF with AND, OR, and NOT

The IF function is much more than just making simple true or false distinctions; it helps us unlock the nuanced layers of our data, guiding us through the paths our analysis can take. By effectively leveraging this function we can craft detailed narratives from our datasets.

We are tasked with setting sales targets for each region. The goal is to base these targets on a percent change seen in the previous year. Depending on whether a region experienced a growth or decline, the sales target for the current year is set accordingly.

Region Specific Sales Target =

IF(

HASONEVALUE(Regions[Region]),

IF(

[Percent Change(CY-1/CY-2)] < 0,

[Total Sales (CY-1)]*1.1,

[Total Sales (CY-1)]*1.2

),

BLANK()

)

In this measure we make use of three other measures within our model. We calculate the total sales for the previous year (Total Sales (CY-1)), and the year before that (Total Sales (CY-2)). We then determine the percentage change between these two values.

If there is a decline (negative percent change), we set the current year’s sales target to be 10% higher than the previous year’s sales, indicating a more conservative goal. Conversely, if there was growth (positive percent change), we set the current year target 20% higher to keep the momentum going.

As we dive deeper, combining IF with functions like AND, OR, and NOT we begin to see the true flexibility of these functions in DAX. These logical operators allow us to construct more intricate conditions, tailoring our analysis to very specific scenarios.

The operator functions are used to combine multiple conditions:

- AND returns TRUE if all conditions are true

- OR returns TRUE if any condition is true

- NOT returns TRUE if the condition is false

Let’s craft a measure to determine which employees are eligible for a quarterly bonus. The criterion for eligibility is twofold: the employee must have made at least one sale in the current quarter, and their average sale amount during this period must exceed the overall average sale amount.

To implement this, we first need to calculate the average sales and compare each employee’s average sale against this benchmark. Additionally, we check if the employee has sales recorded in the current quarter to qualify for the bonus.

Employee Bonus Eligibility =

VAR CurrentQuarterStart = DATE(YEAR(TODAY()), QUARTER(TODAY()) * 3 - 2, 1)

VAR CurrentQuarterEnd = EOMONTH(DATE(YEAR(TODAY()), QUARTER(TODAY()) * 3, 1), 0)

VAR OverallAverageSale = CALCULATE(AVERAGE(Sales[Amount]), ALL(Sales))

VAR EmployeeAverageSale = CALCULATE(AVERAGE(Sales[Amount]), FILTER(Sales, Sales[SalesDate] >= CurrentQuarterStart && Sales[SalesDate] = CurrentQuarterStart && Sales[SalesDate] 0

RETURN

IF(

AND(HasSalesCurrentQuarter, EmployeeAverageSale > OverallAverageSale),

"Eligible for Bonus",

"Not Eligible for Bonus"

)

In this measure we define the start and end dates of the current quarter, then we calculate the overall average sale across all data for comparison. We then determine each employee’s average sale amount and check if the employee has made any sales in the current quarter to qualify for evaluation.

If an employee has active sales and their average sale amount during the period is above the overall average, they are deemed “Eligible for Bonus”. Otherwise, they are “Not Eligible for Bonus”.

This example begins to explore how we can use IF in conjunction with AND to streamline business logic into actionable insights. These logical functions provide a robust framework for asking detailed questions about our data and receiving precise answers, allowing us to uncover the insights hidden within the numbers.

Beyond the Basics: Advanced Logical Functions in DAX

As we venture beyond the foundational logical functions we step into a world where DAX’s versatility shines, especially when dealing with complex data models in Power BI. More advanced logical functions such as SWITCH and COALESCE bring a level of clarity and efficiency that is hard to match with just basic IF statements.

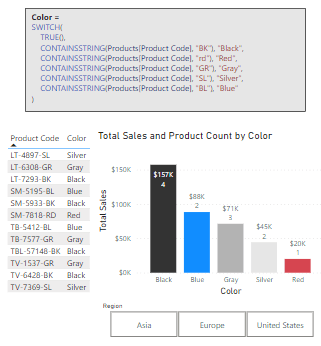

SWITCH Function: Simplifying Complex Logic

The SWITCH function is a more powerful version of the IF function and is ideal for scenarios where we need to compare a single expression against multiple potential values and return one of multiple possible result expressions. This function helps us provide clarity by avoiding multiple nested IF statements. Here is its syntax.

SWITCH(expression, value, result[, value, result]...[, else])

The expression parameter is a DAX expression that returns a single scalar value and is evaluated multiple times depending on the context. The value parameter is a constant value that is matched with the results of expression, the result is any scalar expression to be evaluated if the result of expression matches the corresponding value. Finally, the else parameter is an expression to be evaluated if the result of expression does not match any value arguments.

Let’s explore. We have a scenario where we want to apply different discount rates to products based on their categories (Smartphone, Laptop, Tablet). We could achieve this by using the following expression for a new calculated column, which uses nested IFs.

Product Discount Rate (IF) =

IF(

Products[Product]="Smartphone", 0.10,

IF(Products[Product]="Laptop", 0.15,

IF(Products[Product]="Tablet", 0.20,

0.05

)

)

)

Although this would achieve our goal, the use of nested if statements can make the logic of the calculated column hard to read, understand, and most importantly hard to troubleshoot.

Now, let’s see how we can improve the readability and clarity by implementing SWITCH to replace the nested IF statements.

Product Discount Rate =

SWITCH(

Products[Product],

"Smartphone", 0.10,

"Laptop", 0.15,

"Tablet", 0.20,

0.05

)

The expression simplifies the mapping of each Product to its corresponding discount rate and provides a default rate for categories that are not explicitly listed.

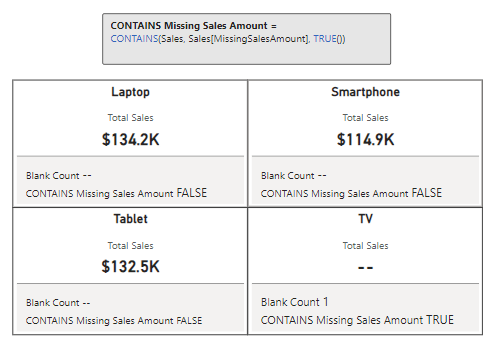

COALESCE Function: Handling Blank or Null Values

The COALESCE function offers a straightforward way to deal with BLANK values within our data, returning the first non-blank value in a list of expressions. If all expressions evaluate to BLANK, then a BLANK value is returned. Its syntax is also straightforward.

COALESCE(expression, expression[, expression]...)

Here, expression can be any DAX expression that returns a scalar value. These expressions are evaluated in the order they are passed to the COALESCE function.

When reporting on our sales data, encountering blanks can sometimes communicate the wrong message. Using COALESCE we can address this by providing a more informative value when there are no associated sales.

Product Sales = COALESCE([Total Sales], "No Sales")

With this new measure if our Total Sales measure returns a blank, for example due to filters applied in the report, COALESCE ensures this is communicated with a value of “No Sales”. This approach can be beneficial for maintaining meaningful communication in our reports. It ensures that our viewers understand the lack of sales being reported, rather than interpreting a blank space as missing or erroneous data.

These logical functions enrich our DAX toolkit, enabling more elegant solutions to complex problems. By efficiently managing multiple conditions and safeguarding against potential errors, SWITCH and COALESCE not only optimize our Power BI models but also enhance our ability to extract meaningful insights from our data.

With these functions, our journey into DAX’s logical capabilities becomes even more exciting, revealing the depth and breadth of analysis we can achieve. Let’s continue to unlock the potential within our data, leveraging these tools to craft insightful, dynamic reports.

Logical Comparisons and Conditions: Crafting Complex DAX Logic

Delving deeper into DAX, we encounter scenarios that demand a blend of logical comparisons and conditions. This complexity arises from weaving together multiple criteria to craft intricate logic that precisely targets our analytical goals.

We touched on logical operators briefly in a previous section, the AND, OR, and NOT functions are crucial for building complex logical structures. Let’s continue to dive deeper into these with some more hands-on and practical examples.

Multi-Condition Sales Analysis

We want to identify and count the number Sales transactions that meet specific criteria: sales above a threshold and within a particular region. To achieve this, we create a new measure using the AND operator to count the rows in our sales table that meet our criteria.

High Value Sales in US (Count) =

COUNTROWS(

FILTER(

Sales,

AND(

Sales[Amount] > 5500,

RELATED(Regions[Region]) = "United States"

)

)

)

This measure filters our Sales table to sales that have an amount greater than our threshold of $5,500 and have a sales region of United States.

Excluding Specific Conditions

We need to calculate total year-to-date sales while excluding sales from a particular region or below a certain amount. We can leverage the NOT function to achieve this.

Sales Excluding Asia and Low Values =

CALCULATE(

SUM(Sales[Amount]),

AND(

NOT(Sales[RegionID] = 3), // RegionID=3 (Asia)

Sales[Amount] > 5500

),

Sales[IsCurrentYear]=TRUE()

)

This measure calculates the sum of the sales amount that are not within our Asia sales region and are above $5,500. Using NOT we exclude sales from the Asia region and we us the AND function to also impose the minimum sales amount threshold.

Special Incentive Qualifying Sales

Our goal is to identify sales transactions eligible for a special incentive based on multiple criteria: sales amount, region, employee involvement, and a temporal aspect of the sales data. Here is how we can achieve this.

Special Incentive Qualifying Sales =

CALCULATE(

SUM(Sales[Amount]),

OR(

Sales[Amount] > 7500,

Sales[RegionID] = 2

),

NOT(

Sales[EmployeeID] = 4

),

Sales[IsCurrentYear] = TRUE()

)

The OR function is used to include sales transactions that either exceed $7,500 or are made in Europe (RegionID = 2) and the NOT function excludes transaction made by an employee (EmployeeID = 4), who is a manager and exempt from the incentive program. The final condition is that the sale occurred in the current year.

The new measure combines logical tests to filter our sales data, identifying the specific transactions that qualify for a special incentive under detailed conditions.

By leveraging DAX’s logical functions to construct complex conditional logic, we can precisely target specific segments of our data, uncover nuanced insights, and tailor our analysis to meet specific business needs. These examples showcase just the beginning of what is possible when we combine logical functions in creative ways, highlighting DAX’s robustness and flexibly in tackling intricate data challenges.

Wrapping Up: From Logic to Action

Our journey through the world of DAX logical functions underscores their transformative power within our data analysis. By harnessing IF, SWITCH, AND, OR, and more, we’ve seen how data can be sculpted into actionable insights, guiding strategic decisions with precision. To explore other DAX Logical Functions or get more details visit the DAX Function Reference.

DAX Function Reference – Logical Functions

Learn more about: DAX Logical Functions

Logical reasoning in data analysis is fundamental. It allows us to uncover hidden patterns and respond to business needs effectively, demonstrating that the true value of data lies in its interpretation and application. DAX logical functions are the keys to unlocking this potential, offering clarity and direction in our sea of numbers.

As we continue to delve deeper into DAX and Power BI, let the insights derived from logical functions inspire action and drive decision-making. To explore other functions groups that elevate our data analysis check out the Dive into DAX series, with each post comes the opportunity to enhance your data analytics and Power BI reports.

Dive into DAX: Breaking Down DAX Functions in Power BI

Explore the intricate landscape of DAX in Power BI, revealing the potential to enhance your data analytics with every post.

Thank you for reading! Stay curious, and until next time, happy learning.

And, remember, as Albert Einstein once said, “Anyone who has never made a mistake has never tried anything new.” So, don’t be afraid of making mistakes, practice makes perfect. Continuously experiment and explore new DAX functions, and challenge yourself with real-world data scenarios.

If this sparked your curiosity, keep that spark alive and check back frequently. Better yet, be sure not to miss a post by subscribing! With each new post comes an opportunity to learn something new.